Discrete State Markov Chain Equilibrium

A Markov Chain is a sequence of states

where transitions between states occur ordered in time with

the probability of transition depending only on the previous state. Here the

states will be assumed a discrete finite set and time a discrete unbounded set. If the

set of states is given by the probability

that the process will be in state at time

is denoted by , referred to as the distribution.

Markov Chain equilibrium is defined by ,

that is, as time advances

becomes independent of time. Here a solution

for this limit is discussed and illustrated with examples.

Model

The Markov Chain model is constructed from the set of states ordered in time. The process starts at time with state . At the next step, , the process will assume a state with probability since it will depend on the previous state as defined for a Markov Process. At the next time step the process state will be with probability, since by definition the probability of state transition depends only upon the state at the previous time step. For an arbitrary time the transition from a state to a state will occur with probability, that is independent of . Transition probabilities have the form of a matrix,

will be an matrix where is determined by the number of possible states. Each row represents the Markov Chain transition probability from that state at time and the columns the values at . It follows that,

Equation is the definition of a Stochastic Matrix.

The transition probability for a single step in the Markov Process is defined by . The transition probability across two time steps can be obtained with use of the Law of Total Probability,

where the last step follows from the definition of matrix multiplication. It is straight forward but tedious to use Mathematical Induction to extend the previous result to the case of an arbitrary time difference, ,

It should be noted that since is a transition probability it must satisfy,

To determine the equilibrium solution of the distribution of states, , the distribution time variability must be determined. Begin by considering an arbitrary distribution at , which can be written as a column vector,

since it is a probability distribution must satisfy,

The distribution after the first step is given by,

where is the transpose of . Similarly, the distribution after the second step is,

A pattern is clearly developing. Mathematical Induction can be used to prove the distribution at an arbitrary time is given by,

or as a column vector,

Where and are the initial distribution and the distribution after steps respectively.

Equilibrium Transition Matrix

The probability of transitioning between two states and in time steps was previously shown to be stochastic matrix constrained by equation . The equilibrium transition matrix is defined by, This limit can be determined using Matrix Diagonalization. The following sections will use diagonalization to construct a form of that will easily allow evaluation equilibrium limit.

Eigenvectors and Eigenvalues of the Transition Matrix

Matrix Diagonalization requires evaluation of eigenvalues and eigenvectors, which are defined by the solutions to the equation, where is the eigenvector and eigenvalue. From equation it follows,

Since is a stochastic matrix it satisfies equation . As a result of these constraints the limit requires,

It follows that . Next, it will be shown that and that a column vector of with rows, are eigenvalue and eigenvector solutions of equation ,

where use was made of the stochastic matrix condition from equation , namely, .

To go further a result from the Perron-Frobenius Theorem is needed. This theorem states that a stochastic matrix will have a largest eigenvalue with multiplicity 1. Here all eigenvalues will satisfy .

Denote the eigenvector by the column vector,

and let be the matrix with columns that are the eigenvectors of with from equation in the first column,

Assume that is invertible and denote the inverse by,

If the identity matrix is represented by then . Let be a diagonal matrix constructed from the eigenvalues of using ,

Sufficient information about the eigenvalues and eigenvectors has been obtained to prove some very general results for Markov Chains. The following section will work through the equilibrium limit using the using these results to construct a diagonalized representation of the matrix.

Diagonalization of Transition Matrix

Using the results obtained in the previous section the diagonalized form of the transition matrix is given by,

Using this representation of an expression for is obtained,

Evaluation of is straight forward,

Since in the limit it is seen that,

so,

Evaluation of the first two terms on the righthand side gives,

It follows that,

Finally, the equilibrium transition matrix is given by,

The rows of are identical and given by the first row of the inverse of the matrix of eigenvectors, , from equation . This row is a consequence of the location of the eigenvalue and eigenvector in and respectively. Since is a transition matrix,

Equilibrium Distribution

The equilibrium distribution is defined by a solution to equation that is independent of time.

Consider a distribution that satisfies,

It is easy to show that is an equilibrium solution by substituting it into equation .

Relationship Between Equilibrium Distribution and Transition Matrix

To determine the relationship between and , begin by considering an arbitrary initial distribution states with elements,

where,

The distribution when the Markov Chain has had sufficient time to reach equilibrium will be given by,

since, ,

Thus any initial distribution will after sufficient time approach the distribution above. It follows that it will be the solution of equation which defines the equilibrium distribution,

Solution of Equilibrium Equation

An equation for the equilibrium distribution, , can be obtained from equation , where is the identity matrix. Equation alone is insufficient to obtain a unique solution since the system of linear equations it defines is Linearly Dependent. In a linearly dependent system of equations some equations are the result of linear operations on the others. It is straight forward to show that one of the equations defined by can be eliminated by summing the other equations and multiplying by . If the equations were linearly independent the only solution would be the trivial zero solution, . A unique solution to is obtained by including the normalization constraint, The resulting system of equations to be solved is given by,

Example

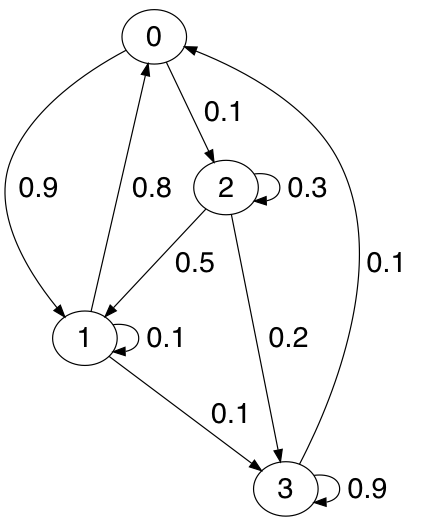

Consider the Markov Chain defined by the transition matrix, The state transition diagram below provides a graphical representation of .

Convergence to Equilibrium

Relaxation of both the transition matrix and distribution to their equilibrium values

is easily demonstrated with the few lines of Python executed within ipython.

In [1]: import numpy

In [2]: t = [[0.0, 0.9, 0.1, 0.0],

...: [0.8, 0.1, 0.0, 0.1],

...: [0.0, 0.5, 0.3, 0.2],

...: [0.1, 0.0, 0.0, 0.9]]

In [3]: p = numpy.matrix(t)

In [4]: p**100

Out[4]:

matrix([[0.27876106, 0.30088496, 0.03982301, 0.38053097],

[0.27876106, 0.30088496, 0.03982301, 0.38053097],

[0.27876106, 0.30088496, 0.03982301, 0.38053097],

[0.27876106, 0.30088495, 0.03982301, 0.38053098]])

Here the transition matrix from the initial state to states time steps in the future is computed using equation . The result obtained has identical rows as obtained in equation .

For an initial distribution the distribution after time steps is evaluated using,

In [5]: c = [[0.1],

...: [0.5],

...: [0.35],

...: [0.05]]

In [6]: π = numpy.matrix(c)

In [8]: π.T*p**100

Out[8]: matrix([[0.27876106, 0.30088496, 0.03982301, 0.38053097]])

Here an initial distribution is constructed that satisfies . Then equation is used to compute the distribution after time steps. The result is that expected from the previous analysis. In the equilibrium limit the distribution is the repeated row of the equilibrium transition matrix, namely,

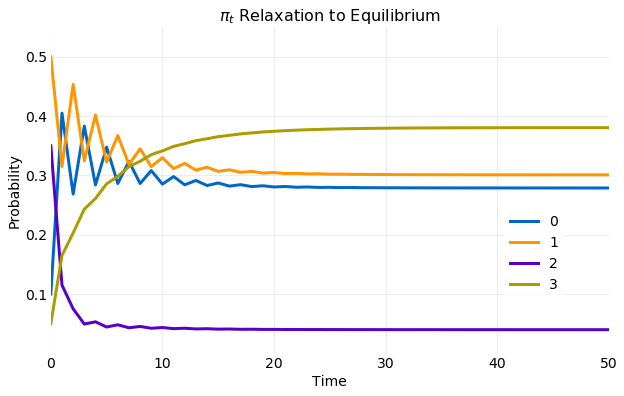

The plot below illustrates the convergence of the distribution from the previous example. In the plot the components of from equation are plotted for each time step. The convergence to the limiting value occurs rapidly. Within only steps has reached limiting distribution.

Equilibrium Transition Matrix

In this section the equilibrium limit of the transition matrix is determined for the example Markov Chain

shown in equation .

It was previously shown that this limit is given by equation . To evaluate

this equation the example transition matrix must be diagonalized.

First, the transition matrix eigenvalues and eigenvectors are computed using the numpy linear

algebra library.

In [9]: λ, v = numpy.linalg.eig(p)

In [10]: λ

Out[10]: array([-0.77413013, 0.24223905, 1. , 0.83189108])

In [11]: v

Out[11]:

matrix([[-0.70411894, 0.02102317, 0.5 , -0.4978592 ],

[ 0.63959501, 0.11599428, 0.5 , -0.44431454],

[-0.30555819, -0.99302222, 0.5 , -0.14281543],

[ 0.04205879, -0.00319617, 0.5 , 0.73097508]])

It is seen that is indeed an eigenvalue,

as previously proven and that other eigenvalues have magnitudes less than .

This is in agreement with Perron-Frobenius Theorem. The numpy linear algebra library normalizes the

eigenvectors and uses the same order for eigenvalues and eigenvector columns. The eigenvector

corresponding to is in the third column and has all components equal.

Eigenvectors are only known to an arbitrary scalar, so the vector of used

in the previous analysis can be obtained by multiplying the third column by .

After obtaining the eigenvalues and eigenvectors equation is evaluated

after time steps and compared with the equilibrium limit.

In [12]: Λ = numpy.diag(λ)

In [13]: V = numpy.matrix(v)

In [14]: Vinv = numpy.linalg.inv(V)

In [15]: Λ_t = Λ**100

In [16]: Λ_t

Out[16]:

array([[7.61022278e-12, 0.00000000e+00, 0.00000000e+00, 0.00000000e+00],

[0.00000000e+00, 2.65714622e-62, 0.00000000e+00, 0.00000000e+00],

[0.00000000e+00, 0.00000000e+00, 1.00000000e+00, 0.00000000e+00],

[0.00000000e+00, 0.00000000e+00, 0.00000000e+00, 1.01542303e-08]])

In [17]: V * Λ_t * Vinv

Out[17]:

matrix([[0.27876106, 0.30088496, 0.03982301, 0.38053097],

[0.27876106, 0.30088496, 0.03982301, 0.38053097],

[0.27876106, 0.30088496, 0.03982301, 0.38053097],

[0.27876106, 0.30088495, 0.03982301, 0.38053098]])

First, the diagonal matrix of eigenvalues, , is created maintaining the order of . Next, the matrix is constructed with eigenvectors as columns while also maintaining the order of vectors in . The inverse of is then computed. can now be computed for time steps. The result is in agreement with the past effort where the limit was evaluated giving a matrix that contained a in the component corresponding to the position of the component and zeros for all others. Here the eigenvectors are ordered differently but the only nonzero component has the eigenvalue. Finally, equation is evaluated and all rows are identical and equal to evaluated at , in agreement with the equilibrium limit determined previously and calculations performed in the last section shown in equations and .

Equilibrium Distribution

The equilibrium distribution will now be calculated using the system of linear equations defined by equation . Below the resulting system of equations for the example distribution in equation is shown.

This system of equations is solved using the least squares method provided by the numpy linear

algebra library, which requires the use of the transpose of the above equation.

The first line below computes it using equation .

In [18]: E = numpy.concatenate((p.T - numpy.eye(4), [numpy.ones(4)]))

In [19]: E

Out[19]:

matrix([[-1. , 0.8, 0. , 0.1],

[ 0.9, -0.9, 0.5, 0. ],

[ 0.1, 0. , -0.7, 0. ],

[ 0. , 0.1, 0.2, -0.1],

[ 1. , 1. , 1. , 1. ]])

In [20]: πe, _, _, _ = numpy.linalg.lstsq(E, numpy.array([0.0, 0.0, 0.0, 0.0, 1.0]), rcond=None)

In [21]: πe

Out[21]: array([0.27876106, 0.30088496, 0.03982301, 0.38053097])

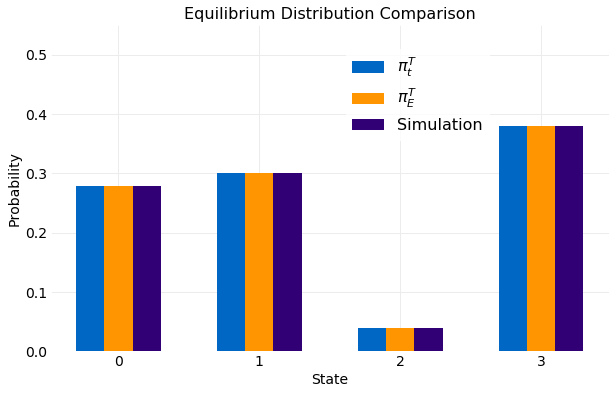

Next, the equilibrium distribution is evaluated using the least squares method. The result obtained is consistent with previous results shown in equation .

Simulation

This section will use a direct simulation of equation to calculate the equilibrium distribution and compare the result to those previously obtained. Below a Python implementation of the calculation is shown.

import numpy

def sample_chain(t, x0, nsample):

xt = numpy.zeros(nsample, dtype=int)

xt[0] = x0

up = numpy.random.rand(nsample)

cdf = [numpy.cumsum(t[i]) for i in range(4)]

for t in range(nsample - 1):

xt[t] = numpy.flatnonzero(cdf[xt[t-1]] >= up[t])[0]

return xt

# Simulation parameters

π_nsamples = 1000

nsamples = 10000

c = [[0.25],

[0.25],

[0.25],

[0.25]]

# Generate π_nsamples initial state samples

π = numpy.matrix(c)

π_cdf = numpy.cumsum(c)

π_samples = [numpy.flatnonzero(π_cdf >= u)[0] for u in numpy.random.rand(π_nsamples)]

# Run sample_chain for nsamples for each of the initial state samples

chain_samples = numpy.array([])

for x0 in π_samples:

chain_samples = numpy.append(chain_samples, sample_chain(t, x0, nsamples))

The function sample_chain performs the simulation and uses

Inverse CDF Sampling on the

discrete distribution obtained from the transition matrix defined by equation .

The transition matrix determines state at step from the state at step

. The following code uses sample_chain to generate and ensemble of

simulations with the initial state also sampled from an assumed initial distribution.

First, simulation parameters are defined and the initial distribution is assumed to be uniform.

Second, π_nsamples of the initial state are generated using Inverse CDF sampling with the

initial distribution. Finally, simulations of length nsamples are performed for each initial state.

The ensemble of samples are collected in the variable chain_samples and plotted below. A comparison

is made with the two other calculations performed. The first is for

shown in equation and the second

the solution to equation . The different

calculations are indistinguishable.

Conclusions

Markov Chain equilibrium for discrete state processes is a general theory of the time evolution

of transition probabilities and state distributions. It has been shown that equilibrium

is a consequence of assuming the transition matrix and distribution vector are both stochastic.

Expressions were derived for the time evolution of any transition matrix and distribution and

the equilibrium solutions were then obtained analytically by evaluating the limit

. A calculation was performed using the obtained

equations for the equilibrium solutions and the numpy linear algebra libraries

using an example transition matrix. These results were compared to ensemble simulations.

The time to relax from an arbitrary state to the equilibrium distributions was shown to occur

within time steps.