Discrete Cross Correlation Theorem

The Cross Correlation Theorem is similar to the more widely known Convolution Theorem. The cross correlation of two discrete finite time series and is defined by,

where is called the time lag. Cross correlation provides a measure of the similitude

of two time series when shifted by the time lag. A direct calculation of the cross correlation using the

equation above requires operations. The Cross Correlation Theorem

provides a method for calculating cross correlation in

operations by use of the

Fast Fourier Transform. Here the theoretical

background required to understand cross correlation calculations using the Cross Correlation Theorem is discussed.

Example calculations are performed and different implementations using the FFT libraries in numpy compared.

The important special case of the cross correlation called Autocorrelation is addressed in the final section.

Cross Correlation

Cross Correlation can be understood by considering the Covariance of two random variables, and . Covariance is the Expectation of the product of the deviations of the random variables from their respective means,

Note that and if and are Independent Random Variables . If the Covariance reduces to the Variance,

These two results are combined in the definition of the Correlation Coefficient,

The correlation coefficient has a geometric interpretation leading to the conclusion that . At the extreme values and are either the same or differ by a multiple of . At the midpoint value, , and are independent random variables. If follows that provides a measure of possible dependence or similarity of two random variables.

Consider the first term in equation when a time series of samples of and of equal length are available,

if is sufficiently large. Generalizing this result to arbitrary time shifts leads to equation .

An important special case of the cross correlation is the autocorrelation which is defined a the cross correlation of a time series with itself,

Building on the interpretation of cross correlation the autocorrelation is viewed as a measure of dependence or similarity of the past and future of a time series. For a time lag of ,

Discrete Fourier Transform

This section will discuss properties of the Discrete Fourier Transform that are used in following sections. The Discrete Fourier Transform for a discrete periodic time series of length , , is defined by,

where the expression for is referred to the inverse transform and the one for the forward transform.

Linearity

Both the forward and inverse transforms are Linear Operators. An operator, , is linear if the operation on a sum is equal the sum of the operations,

To show this for the forward transform consider , then,

Similarly it can be shown that the inverse transform is linear.

Periodicity

Periodicity of the forward transform implies that, where . To show that this is true first consider the case ,

where the second step follows from, .

For an arbitrary value of ,

since, .

Consequence of Real

If is real then , where denotes the Complex Conjugate. It follows that,

Another interesting and related result is,

which follows from,

Orthogonality of Fourier Basis

The Discrete Fourier Basis is the collection of functions,

where . It forms an Orthogonal Basis since,

where is the Kronecker Delta. This result can be proven for by noting that the sum in equation is a Geometric Series,

Since is always a multiple of it follows that the numerator is zero,

The denominator is zero only if where is an integer. This cannot happen since , so,

If then,

this proves that equation .

The Cross Correlation Theorem

The Cross Correlation Theorem is a relationship between the Fourier Transform of the cross correlation, defined by equation and the Fourier Transforms of the two time series used in the cross correlation calculation,

where,

To derive equation consider the Inverse Fourier Transform of the time series and ,

Substituting these expressions into equation gives,

where the second step follows from equation . Equation follows by taking the Fourier Transform of the previous result,

proving the Cross Correlation Theorem defined by equation .

Discrete Fourier Transform Example

This section will work through an example calculation of a discrete Fourier Transform that can be worked

out by hand. The manual calculations will be compared with calculations performed using the FFT library

from numpy.

The Discrete Fourier Transform of a time series, represented by the column vector , into a column vector of Fourier coefficients, , can be represented by the linear equation,

where is the transform matrix computed from the Fourier basis functions. Each element of the matrix is the value of the basis function used in the calculation. It is assumed that the time series contains only points so that will be a matrix. The transform matrix only depends on the number of elements in the time series vector and is given by,

Assume an example time series of,

It follows that,

The Python code listing below uses the FFT implementation from numpy to confirm the calculation of

equation . It first defines the time series example data

. The Fourier Transform is then used to compute

.

In [1]: import numpy

In [2]: f = numpy.array([8, 4, 8, 0])

In [3]: numpy.fft.fft(f)

Out[3]: array([20.+0.j, 0.-4.j, 12.+0.j, 0.+4.j])

Cross Correlation Theorem Example

This section will work through an example calculation of cross correlation using the Cross Correlation Theorem with the goal of verifying an implementation of the algorithm in Python. Here use will be made of the time series vector and the transform matrix discussed in the previous section. An additional time series vector also needs to be considered, let,

First, consider a direct calculation of the cross correlation,

. The following python function

cross_correlate_sum(x, y) implements the required summation.

In [1]: import numpy

In [2]: def cross_correlate_sum(x, y):

...: n = len(x)

...: correlation = numpy.zeros(len(x))

...: for t in range(n):

...: for k in range(0, n - t):

...: correlation[t] += x[k] * y[k + t]

...: return correlation

...:

In [3]: f = numpy.array([8, 4, 8, 0])

In [4]: g = numpy.array([6, 3, 9, 3])

In [5]: cross_correlate_sum(f, g)

Out[5]: array([132., 84., 84., 24.])

cross_correlate_sum(x, y) takes two vectors, x and y, as arguments. It assumes that x and y are equal length and after allocating storage for the result performs the double summation required to compute the cross

correlation for all possible time lags, returning the result. It is also seen that

operations are required to perform the calculation, where

is the length of the input vectors.

Verification of following results requires a manual calculation. A method of organizing the calculation to facilitate

this is shown in table below. The table rows are constructed from the elements of

and the time lagged

elements of for each value .

The column is indexed by the element number

. The time lag shift performed on the vector results

in the translation of the components to the left that increases for each row as the time lag increases. Since the number of elements in

and is finite the time lag shift will lead to some

elements not participating in for some time lag values. If there is no element

in the table at a position the value of or at that position

is assumed to be .

| 0 | 1 | 2 | 3 | ||||

|---|---|---|---|---|---|---|---|

| 8 | 4 | 8 | 0 | ||||

| 6 | 3 | 9 | 3 | ||||

| 6 | 3 | 9 | 3 | ||||

| 6 | 3 | 9 | 3 | ||||

| 6 | 3 | 9 | 3 |

The cross correlation, , for a value of the time lag, , is computed for each by multiplication of and and summing the results. The outcome of this calculation is shown as the column vector below where each row corresponds to a different time lag value.

The result is the same as determined by cross_correlate_sum(x,y).

Now that an expectation of a result is established it can be compared with the a calculation using using the Cross Correlation Theorem from equation where and are represented by Discrete Fourier Series. It was previously shown that the Fourier representation is periodic, see equation . It follows that the time lag shifts of will by cyclic as shown in the calculation table below.

| 0 | 1 | 2 | 3 | |

|---|---|---|---|---|

| 8 | 4 | 8 | 0 | |

| 6 | 3 | 9 | 3 | |

| 3 | 9 | 3 | 6 | |

| 9 | 3 | 6 | 3 | |

| 3 | 6 | 3 | 9 |

Performing the calculation following the steps previously described has the following outcome,

This is different from what was obtained from a direct evaluation of the cross correlation sum. Even though the result is different the calculation could be correct since periodicity of and was not assumed when the sum was evaluated. Below a calculation using the Cross Correlation Theorem implemented in Python is shown.

In [1]: import numpy

In [2]: f = numpy.array([8, 4, 8, 0])

In [3]: g = numpy.array([6, 3, 9, 3])

In [4]: f_bar = numpy.fft.fft(f)

In [5]: g_bar = numpy.fft.fft(g)

In [6]: numpy.fft.ifft(f_bar * g_bar)

Out[6]: array([132.+0.j, 72.+0.j, 132.+0.j, 84.+0.j])

In the calculation and are defined and their Fourier Transforms are computed. The Cross Correlation Theorem is then used to compute the Fourier Transform of the cross correlation, which is then inverted. The result obtained is the same as obtained in the manual calculation verifying the results. Since the calculations seem to be correct the problem must be that periodicity of the Fourier representations and was not handled properly. This analysis artifact is called aliasing. The following example attempts to correct for this problem.

The cross correlation calculation table for periodic and can be made to resemble the table for the nonperiodic case by padding the end of the vectors with zeros, where is the vector length, creating a new periodic vector of length . This new construction is shown below.

| 0 | 1 | 2 | 3 | 4 | 5 | 6 | ||||

|---|---|---|---|---|---|---|---|---|---|---|

| 8 | 4 | 8 | 0 | |||||||

| 6 | 3 | 9 | 3 | 0 | 0 | 0 | ||||

| 6 | 3 | 9 | 3 | 0 | 0 | 0 | 6 | |||

| 6 | 3 | 9 | 3 | 0 | 0 | 0 | 6 | 3 | ||

| 6 | 3 | 9 | 3 | 0 | 0 | 0 | 6 | 3 | 9 | |

| 3 | 9 | 3 | 0 | 0 | 0 | 6 | 3 | 9 | 3 | |

| 9 | 3 | 0 | 0 | 0 | 6 | 3 | 9 | 3 | 0 | |

| 3 | 0 | 0 | 0 | 6 | 3 | 9 | 3 | 0 | 0 |

It follows that,

The first elements of this result are the same as obtained calculating the sum directly. The same result is achieved by discarding the last elements. Verification is shown below where the previous example is extended by padding the tail of both and with three zeros.

In [1]: import numpy

In [2]: f = numpy.array([8, 4, 8, 0])

In [3]: g = numpy.array([6, 3, 9, 3])

In [4]: f_bar = numpy.fft.fft(numpy.concatenate((f, numpy.zeros(len(f)-1))))

In [5]: g_bar = numpy.fft.fft(numpy.concatenate((g, numpy.zeros(len(g)-1))))

In [6]: numpy.fft.ifft(numpy.conj(f_bar) * g_bar)

Out[7]:

array([1.3200000e+02+0.j, 8.4000000e+01+0.j, 8.4000000e+01+0.j,

2.4000000e+01+0.j, 4.0602442e-15+0.j, 4.8000000e+01+0.j,

4.8000000e+01+0.j])

The following function, cross_correlate(x, y), generalizes the calculation to vectors of arbitrary but equal lengths.

def cross_correlate(x, y):

n = len(x)

x_padded = numpy.concatenate((x, numpy.zeros(n-1)))

y_padded = numpy.concatenate((y, numpy.zeros(n-1)))

x_fft = numpy.fft.fft(x_padded)

y_fft = numpy.fft.fft(y_padded)

h_fft = numpy.conj(x_fft) * y_fft

cc = numpy.fft.ifft(h_fft)

return cc[0:n]

Autocorrelation

The autocorrelation, defined by equation , is the special case of the cross correlation of a time series with itself. It provides a measure of the dependence or similarity of its past and future. The version of the Cross Correlation Theorem for autocorrelation is given by,

is the weight of each of the coefficients in the Fourier Series representation of the time series and is known as the Power Spectrum.

When discussing the autocorrelation of a time series, , the autocorrelation coefficient is useful,

where and are the time series mean and standard deviation respectively. Below a Python implementation calculating the autocorrelation coefficient is given.

def autocorrelate(x):

n = len(x)

x_shifted = x - x.mean()

x_padded = numpy.concatenate((x_shifted, numpy.zeros(n-1)))

x_fft = numpy.fft.fft(x_padded)

r_fft = numpy.conj(x_fft) * x_fft

ac = numpy.fft.ifft(r_fft)

return ac[0:n]/ac[0]

autocorrelate(x) takes a single argument, x, that is the time series used in the calculation.

The function first shifts

x by its mean, then adds padding to remove aliasing and computes its FFT. Equation is

next used to compute the Discrete Fourier Transform of the autocorrelation which is inverted. The final result is

normalized by the zero lag autocorrelation which equals .

AR(1) Equilibrium Autocorrelation

The equilibrium properties of the AR(1) random process are discussed in some detail in Continuous State Markov Chain Equilibrium. AR(1) is defined by the difference equation,

where and the are identically distributed independent random variables.

The equilibrium mean and standard deviation, and are given by,

The equilibrium autocorrelation with time lag is defined by,

If equation is used to evaluate a few steps beyond an arbitrary time it is seen that,

Substituting the equation for into the equation for and that result into the equation for gives,

If this procedure is continued for steps the following is obtained,

It follows that the autocorrelation is given by,

To go further the summation in the last step needs to be evaluated. In a previous post it was shown that,

Using this result the summation term becomes,

since the are independent and identically distributed random variables. It follows that,

Evaluation of the equilibrium limit gives,

The last step follows from by assuming that so that and are finite. A simple form of the autocorrelation coefficient in the equilibrium limit follows from equation ,

remains finite for increasing only for .

AR(1) Simulations

In this section the results obtained for AR(1) equilibrium autocorrelation in the previous section will be compared with simulations. Below an implementation in Python of an AR(1) simulator based on the difference equation representation from equation is listed.

def ar_1_series(α, σ, x0=0.0, nsamples=100):

samples = numpy.zeros(nsamples)

ε = numpy.random.normal(0.0, σ, nsamples)

samples[0] = x0

for i in range(1, nsamples):

samples[i] = α * samples[i-1] + ε[i]

return samples

The function ar_1_series(α, σ, x0, nsamples) takes four arguments: α and σ from equation ,

the initial value of , x0, and the number of desired samples, nsamples. It begins by allocating storage

for the sample output followed by generation of nsamples values of

with the requested standard deviation, .

The samples are then created using the AR(1 ) difference equation, equation .

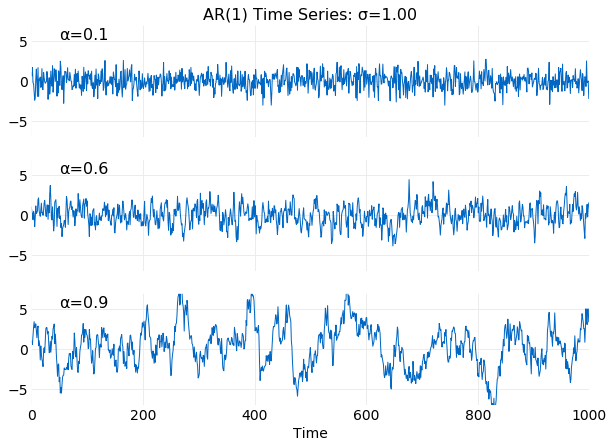

The plots below show examples of simulated time series with and values of satisfying . It is seen that for smaller values the series more frequently change direction and have smaller variance. This is expected from equation .

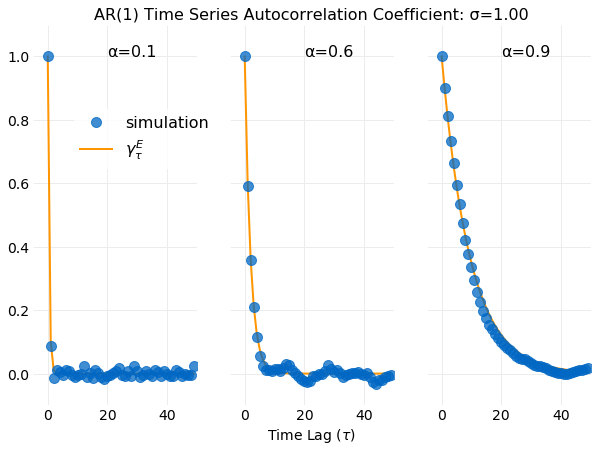

The next series of plots compare the autocorrelation coefficient from equation , obtained in

the equilibrium limit, with an autocorrelation coefficient calculation using the previously discussed autocorrelate(x) function on

the generated sample data. Recall that autocorrelate(x) performs a calculation using the Cross Correlation Theorem.

Equation does a good job of capturing the time scale for the series to become uncorrelated.

Conclusions

The Discrete Cross Correlation Theorem provides a more efficient method of calculating time series

correlations than directly evaluating the sums. For a time series of length N it decreases the cost

of the calculation from to by

use of the Fast Fourier Transform.

An interpretation of the cross correlation as the time lagged covariance of two random variables was presented

and followed by a discussion of the properties of Discrete Fourier Transforms needed to prove the

Cross Correlation Theorem. After building sufficient tools the theorem was derived by application of the

Discrete Fourier Transform to equation , which defines cross correlation.

An example manual calculation of a Discrete Fourier Transform was performed and compared with a calculation

using the FFT library form numpy. Next, manual calculations of cross correlation using a tabular method

to represent the summations were presented and followed by a calculation using the Discrete

Cross Correlation Theorem which illustrated the problem of aliasing. The next example calculation eliminated

aliasing and recovered a result equal to the direct calculation of the summations.

A dealiased implementation using numpy FFT libraries was then presented. Finally,

the Discrete Cross Correlation Theorem for the special case of the autocorrelation was

discussed and a numpy FFT implementation was provided and followed by an example calculation using the AR(1)

random process. In conclusion the autocorrelation coefficient in the equilibrium limit for AR(1) was evaluated

and shown to be finite only for values of the AR(1) parameter that satisfy

. This result is compared to direct simulations and shown to

provide a good estimate of the decorrelation time of the process.